Global Data Quality Management Market Size, Share and Analysis Report By Component (Software, Services), By Deployment Mode (Cloud-based/SaaS, On-premises), By Organization Size (Large Enterprises, Small and Medium-sized Enterprises), By Data Type (Customer Data, Product Data, Financial Data, Operational Data, Others), By End-User Industry (Banking, Financial Services, and Insurance, Healthcare, Retail & E-commerce, IT & Telecommunications, Manufacturing, Others), By Regional Analysis, Global Trends and Opportunity, Future Outlook By 2025-2035

- Published date: March 2026

- Report ID: 179812

- Number of Pages: 345

Quick Navigation

- Report Overview

- Key Takeaway

- Data Quality Management statistics

- Role of Generative AI

- Investment and Business Benefits

- U.S. Market Size

- Component Analysis

- Deployment Mode Analysis

- Organization Size Analysis

- Data Type Analysis

- End-User Industry Analysis

- Emerging trends

- Growth Factors

- Key Market Segments

- Drivers

- Restraint

- Opportunities

- Challenges

- Key Players Analysis

- Recent Developments

- Report Scope

Report Overview

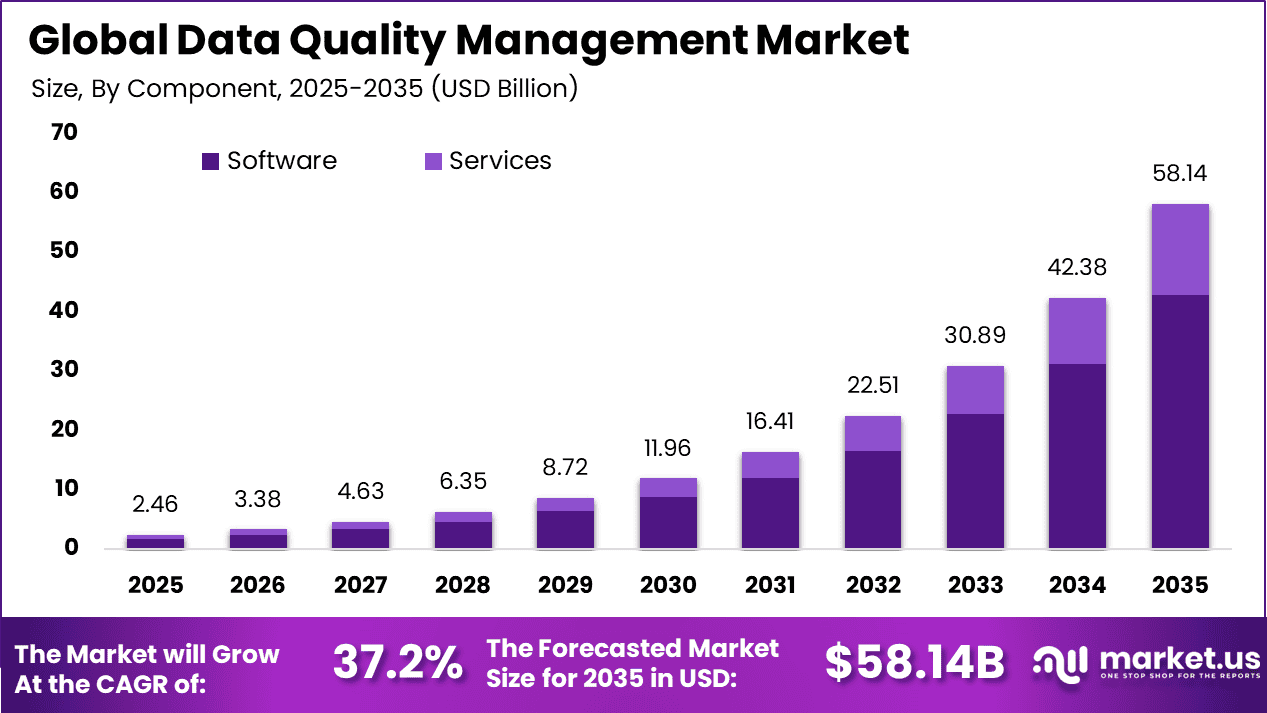

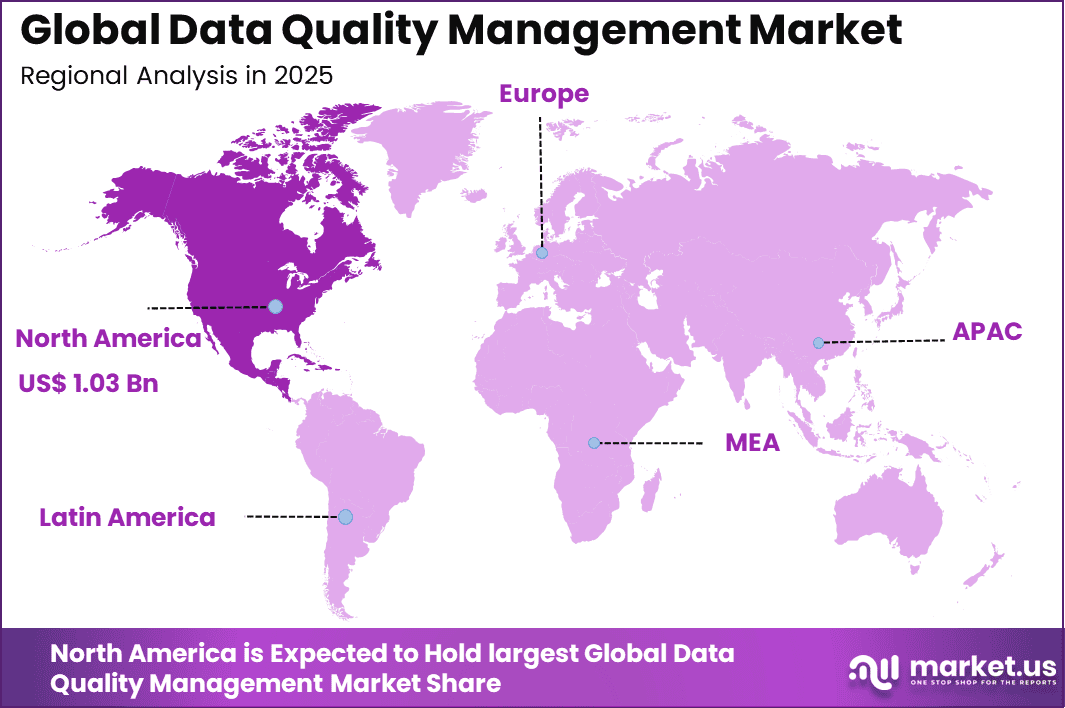

The Global Data Quality Management Market size is expected to be worth around USD 58.14 billion by 2035, from USD 2.46 billion in 2025, growing at a CAGR of 37.2% during the forecast period from 2025 to 2035. North America held a dominant market position, capturing more than a 42.16% share, holding USD 1.03 billion in revenue.
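The headline figures above can be cross-checked with the standard compound annual growth rate formula. The sketch below is only an arithmetic check on the quoted numbers, assuming a ten-year compounding window from 2025 to 2035; the function and constant names are illustrative and do not come from the report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.372 means 37.2%)."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` once per year for `years` years."""
    return start_value * (1 + rate) ** years


# Figures quoted in the report overview, in USD billion.
MARKET_2025 = 2.46
MARKET_2035 = 58.14
NORTH_AMERICA_SHARE = 0.4216

# Assumption: ten compounding periods between 2025 and 2035.
implied_rate = cagr(MARKET_2025, MARKET_2035, years=10)
projected_2035 = project(MARKET_2025, 0.372, years=10)
north_america_revenue = MARKET_2025 * NORTH_AMERICA_SHARE

print(f"Implied CAGR 2025-2035: {implied_rate:.1%}")
print(f"2035 value at 37.2%: USD {projected_2035:.2f} billion")
print(f"North America 2025 revenue: USD {north_america_revenue:.2f} billion")
```

Under that assumption the quoted start value, end value, and growth rate agree with one another to rounding, and the North America revenue figure matches the stated share of the 2025 total.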

Data Quality Management is the structured process of ensuring data is accurate, complete, consistent, reliable, and timely across an organization. It involves monitoring, cleansing, validating, and governing data throughout its lifecycle. By maintaining high-quality information, businesses improve decision-making, reduce operational risks, enhance customer experiences, and build greater trust in analytics and reporting systems.

Businesses prioritize high data quality because teams spend nearly 80% of their time correcting inaccurate or incomplete information instead of generating insights. Rapid data growth from digital platforms increases error risks across systems. Leadership teams recognize that accurate data strengthens strategic decisions, improves cross-functional trust, and reduces rework costs. Reliable datasets streamline operations, enhance reporting accuracy, and create a stable foundation for sustainable business growth.

The market for Data Quality Management is driven by the growing need for accurate and reliable data across industries. Businesses increasingly rely on clean, consistent information to make informed decisions, improve customer experiences, and support analytics. Rising data volumes, digital transformation initiatives, and the demand for trustworthy AI insights further fuel adoption, making effective data quality practices essential for operational efficiency and strategic success.

Demand rises as organizations realize poor data quality disrupts planning, delays projects, and impacts nearly 70% of operational teams. Sales, finance, and marketing departments increasingly rely on accurate insights to meet performance targets. Data inconsistencies lead to missed revenue opportunities and flawed forecasts. As competition intensifies, companies actively seek robust data management solutions that ensure dependable, timely, and actionable information across enterprise functions.

For instance, in January 2026, Oracle introduced Oracle Data Quality Studio within Autonomous Database, featuring automated profiling and ML-based anomaly detection. Adopted by 50+ U.S. financial firms, it cuts manual cleansing efforts by 40%, driving Oracle’s dominance in autonomous data quality for mission-critical applications.

Key Takeaway

- In 2025, the Software segment led the Global Data Quality Management Market, accounting for 73.8% of total share.

- In 2025, Cloud based and SaaS deployment dominated with a 68.7% market share.

- In 2025, Large Enterprises represented the primary organization size segment, capturing 76.5% of overall demand.

- In 2025, Customer Data emerged as the leading data type, contributing 52.4% of the market.

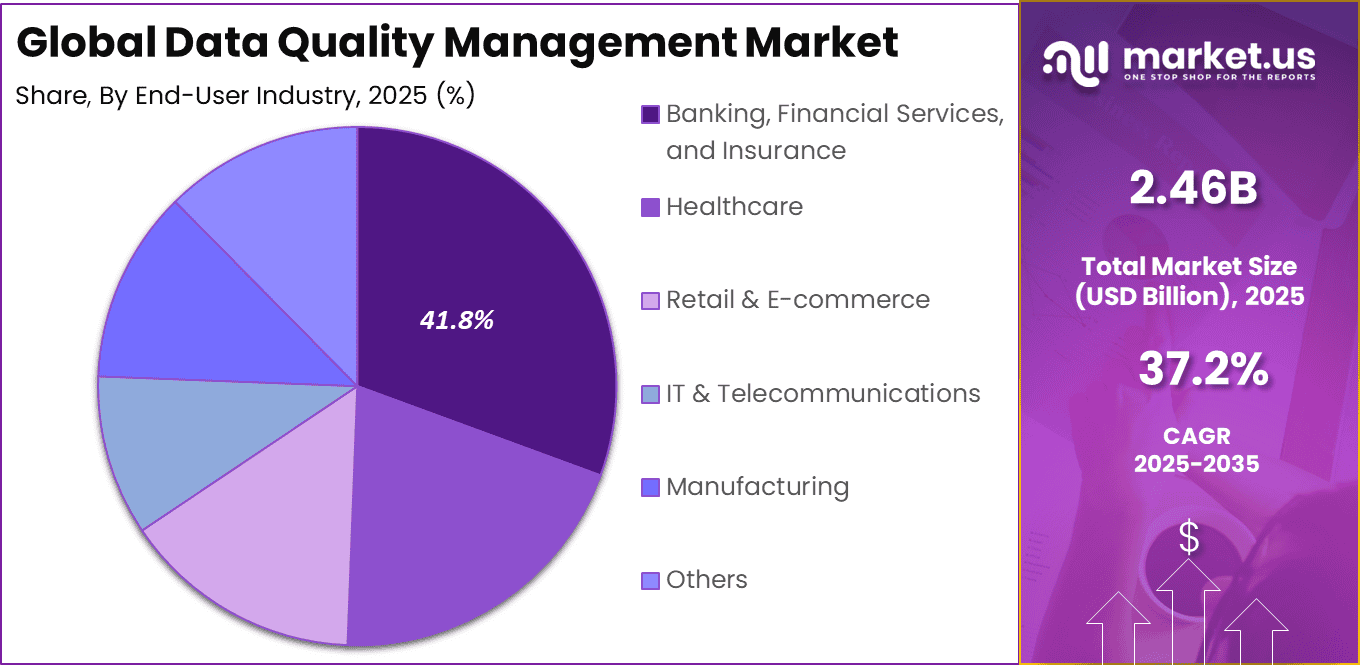

- In 2025, the Banking, Financial Services, and Insurance sector led the end-user industry category with a 41.8% share.

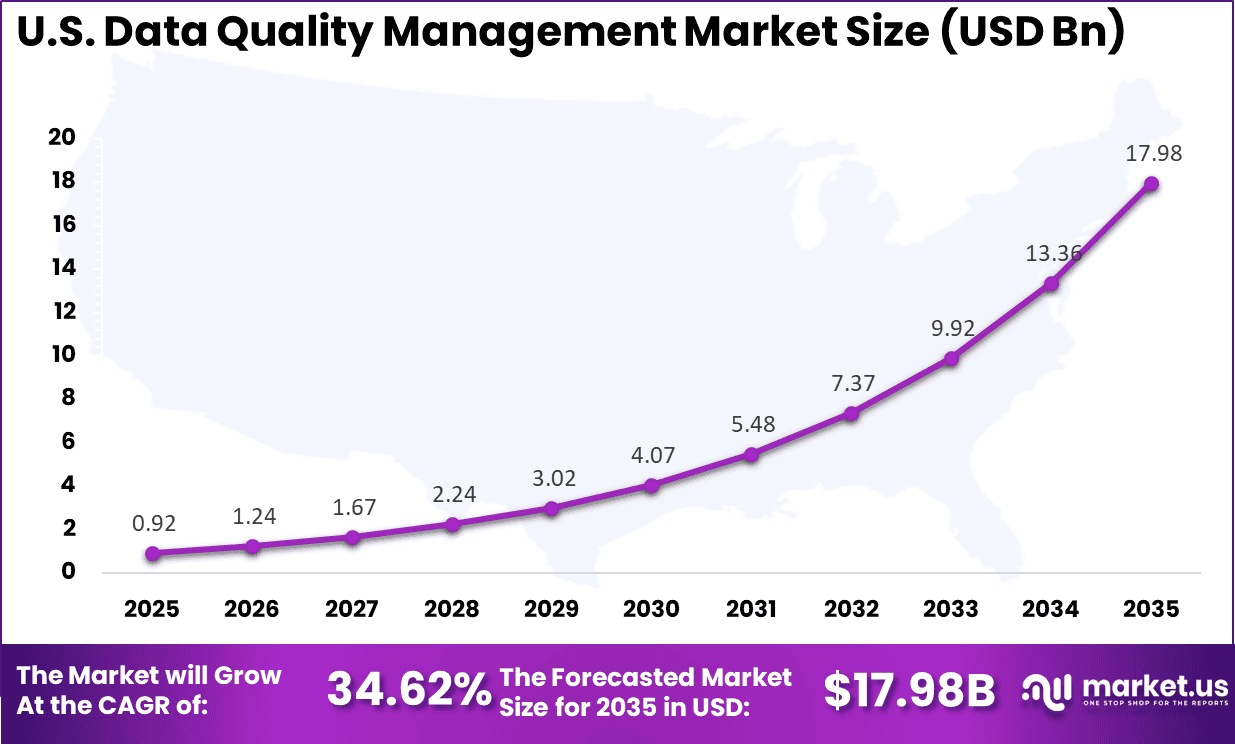

- In 2025, the U.S. Data Quality Management Market reached USD 0.92 billion, with a projected CAGR of 34.62%.

- In 2025, North America maintained regional leadership, securing more than 42.16% of the Global Data Quality Management Market.

Data Quality Management statistics

- Poor data quality results in an average annual loss of USD 12.9 million per organization, with total losses for U.S. businesses estimated as high as USD 600 billion per year.

- Approximately 31% of business revenue is negatively affected by data quality issues.

- Data professionals spend up to 80% of their time cleaning and preparing data, leaving only 20% for analysis and insight generation.

- The related Global Digital Trust Market is expected to be worth around USD 436.8 billion by 2034, up from USD 119.9 billion in 2024, growing at a CAGR of 13.8% during the forecast period from 2025 to 2034.

- In 2024, North America held a dominant position in the Digital Trust Market, capturing more than a 38.5% share and USD 46.1 billion in revenue.

- Manual data entry error rates range between 0.55% and 26.9%, depending on process complexity and controls.

- Data corruption has been observed in 1 out of every 121 file transfers within research networks.

- Around 74% of organizations report that business stakeholders, rather than automated systems, are the first to detect data quality issues.

- Approximately 74% of organizations have implemented AI based solutions for data quality management, with 33% deploying these tools at enterprise scale.

- More than 95,000 companies worldwide use dedicated data quality management tools.

- About 75% of organizations that strengthened their data quality management practices exceeded their primary business objectives.

Role of Generative AI

Generative AI plays a growing role in Data Quality Management by automatically detecting inconsistencies and filling missing values with high precision. Teams using these tools reduce manual corrections by 40%, as AI generates synthetic datasets to test systems, validate models, and strengthen overall data reliability.

Additionally, generative AI predicts potential data issues before they disrupt reporting processes. This proactive approach improves confidence in analytics outputs and increases real-world data accuracy by 25%. As a result, analysts spend less time troubleshooting errors and more time extracting meaningful business insights.

Investment and Business Benefits

Investment opportunities are strongest in advanced tools that embed data quality directly into analytics workflows. Automated monitoring, validation, and alert systems can reduce correction costs by nearly 50%, delivering measurable returns. Organizations often begin with pilot programs to secure early wins before scaling enterprise-wide. Modernizing outdated systems offers significant efficiency gains and creates competitive advantages for early technology adopters.

High-quality data improves customer understanding, increasing satisfaction and retention by up to 75%. Streamlined processes reduce operational errors and can enhance productivity by around 30%. Accurate insights empower teams to identify growth opportunities, personalize services, and improve performance outcomes. Ultimately, clean and dependable data transforms information into a strategic asset that supports stronger decisions and long-term business success.

U.S. Market Size

The U.S. Data Quality Management market is valued at USD 0.92 billion and has a projected CAGR of 34.62%. The market is expanding rapidly due to rising data volumes across cloud platforms, AI-driven applications, and connected devices.

Organizations are prioritizing accurate, compliant, and real-time data to support analytics, regulatory reporting, and customer personalization. Strong digital transformation initiatives across finance, healthcare, and retail sectors further accelerate adoption. Additionally, increasing cybersecurity concerns and governance requirements are pushing enterprises to invest in advanced data validation and monitoring solutions.

For instance, in February 2026, Oracle introduced Data Quality Studio in Oracle Fusion Cloud, embedding ML-powered lineage tracking that cuts data errors by 40%. This Austin innovation underscores U.S. dominance by enabling autonomous enterprises to achieve golden standards across multicloud deployments.

In 2025, North America held a dominant market position in the Global Data Quality Management Market, capturing more than a 42.16% share, holding USD 1.03 billion in revenue. This dominance is due to its advanced digital infrastructure and early adoption of AI-driven analytics across industries.

Enterprises in the US and Canada heavily invest in cloud computing, big data platforms, and governance frameworks to meet strict regulatory standards. Strong presence of leading technology providers, high awareness of data compliance requirements, and continuous innovation in enterprise software solutions further strengthen the region’s dominant position.

For instance, in February 2026, IBM enhanced North America’s data quality dominance by launching Watsonx.data Quality Suite, integrating AI-driven profiling and real-time cleansing for enterprise data lakes. This innovation strengthens compliance with U.S. regulations like CCPA, solidifying IBM’s leadership in scalable DQM solutions across finance and healthcare sectors.

Component Analysis

In 2025, Software accounts for 73.8% of the Data Quality Management Market, reflecting its central role in managing data accuracy, validation, and governance across enterprise systems. Organizations are increasingly implementing automated profiling, cleansing, and monitoring tools to reduce errors and maintain consistent data standards. As digital operations expand, software platforms are being embedded within core IT environments to ensure continuous quality checks across multiple databases.

The dominance of software is also supported by its integration capabilities with analytics systems, data warehouses, and operational applications. Enterprises require configurable rule engines and real time dashboards to track data health indicators. As regulatory expectations and reporting requirements increase, reliance on robust data quality software solutions continues to strengthen.
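As an illustration of what a configurable rule engine with data health indicators can look like, here is a minimal Python sketch. It does not represent any vendor's product: the `Rule` class, the `evaluate` function, and the sample rules and records are all hypothetical, and the "health indicator" is assumed to be a simple pass rate per rule.

```python
from dataclasses import dataclass
from typing import Callable

Record = dict[str, object]


@dataclass(frozen=True)
class Rule:
    name: str
    check: Callable[[Record], bool]  # returns True when the record passes


def evaluate(records: list[Record], rules: list[Rule]) -> dict[str, float]:
    """Return the pass rate per rule, used here as a simple health indicator."""
    if not records:
        return {rule.name: 1.0 for rule in rules}
    return {
        rule.name: sum(rule.check(r) for r in records) / len(records)
        for rule in rules
    }


def amount_non_negative(record: Record) -> bool:
    """Pass only when `amount` is a number greater than or equal to zero."""
    amount = record.get("amount")
    return isinstance(amount, (int, float)) and amount >= 0


# Hypothetical rules and records, for illustration only.
rules = [
    Rule("email_present", lambda r: bool(r.get("email"))),
    Rule("amount_non_negative", amount_non_negative),
]
records = [
    {"email": "a@example.com", "amount": 10.0},
    {"email": "", "amount": 5.0},
    {"email": "c@example.com", "amount": -1.0},
    {"email": "d@example.com", "amount": 7.5},
]

health = evaluate(records, rules)
print(health)
```

Keeping rules as data rather than hard-coded checks is what makes the engine configurable: new rules can be added to the list without changing `evaluate`, and the resulting pass rates could feed a dashboard.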

Deployment Mode Analysis

In 2025, cloud-based and SaaS deployment models represent 68.7% of the market, driven by scalability and operational flexibility. Organizations are prioritizing cloud environments to manage large data volumes without heavy infrastructure investment. Cloud-based quality management platforms have also made centralized governance across distributed teams more efficient.

The subscription based structure of SaaS solutions enables predictable cost management and regular feature updates. Integration with cloud data lakes and business intelligence tools has further accelerated adoption. Enhanced security standards and compliance certifications have improved enterprise confidence in cloud deployments.

For instance, in February 2026, Precisely launched AI agents in its Data Integrity Suite for cloud data quality and enrichment. These agents speed up normalization and rule creation via chat, blending AI smarts with human checks. This fits cloud trends perfectly, making SaaS more accessible and efficient for handling massive data flows in hybrid setups.

Organization Size Analysis

In 2025, large enterprises account for 76.5% of market adoption due to the scale and complexity of their data ecosystems. These organizations operate across multiple business units and geographies, generating significant volumes of structured and unstructured data. Formal data governance programs are therefore being implemented to maintain accuracy and compliance.

The presence of dedicated data management teams and chief data officers within large enterprises has accelerated investment in enterprise wide quality frameworks. Complex legacy systems and hybrid infrastructures require structured monitoring to prevent inconsistencies. As business processes become increasingly data driven, large enterprises remain the primary adopters of advanced quality management solutions.

For instance, in February 2026, Ataccama earned top marks for enterprise strategy from Forrester. Its end-to-end platform handles massive data volumes for AI trust, with lineage that traces issues across teams. This supports large-enterprise dominance by addressing global complexity and regulatory demands.

Data Type Analysis

In 2025, customer data represents 52.4% of the market focus, underscoring its importance in revenue generation and compliance management. Accurate customer records are essential for personalized engagement, transaction processing, and regulatory reporting. Data quality tools are being deployed to eliminate duplication and ensure consistent master records.

The growth of digital channels has increased the volume and variety of customer information collected by organizations. Maintaining a unified and reliable customer profile has become a strategic requirement. As enterprises strengthen their customer experience strategies, investments in customer data validation and governance continue to expand.
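To make the idea of eliminating duplicate customer records concrete, the following is a minimal sketch of exact-match deduplication on a normalized key. It is an assumption-laden illustration, not the method used by any tool named in this report: the field names `name` and `email`, the normalization steps, and the keep-first policy are all hypothetical, and real matching engines typically add fuzzy comparison.

```python
def normalize_key(record: dict[str, str]) -> tuple[str, str]:
    """Build a match key from a lower-cased email and a whitespace-collapsed name."""
    email = record.get("email", "").strip().lower()
    name = " ".join(record.get("name", "").lower().split())
    return (email, name)


def deduplicate(records: list[dict[str, str]]) -> list[dict[str, str]]:
    """Keep the first record seen for each match key, preserving input order."""
    seen: set[tuple[str, str]] = set()
    unique: list[dict[str, str]] = []
    for record in records:
        key = normalize_key(record)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


# Hypothetical customer records, for illustration only.
customers = [
    {"name": "Ada Lovelace", "email": "ada@example.com"},
    {"name": "ada  lovelace", "email": "ADA@example.com "},
    {"name": "Alan Turing", "email": "alan@example.com"},
]

master = deduplicate(customers)
print(len(master), "unique customer records")
```

The second record differs from the first only in letter case and spacing, so both map to the same key and only the first is kept in the master list.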

For instance, in February 2026, Experian highlighted its Three Pillars of Trust, focusing on data quality technology such as AI for customer data governance. Its tools handle large data volumes at scale in cloud environments and data lakes. This approach grows the segment by ensuring accurate customer insights for personalization and compliance amid rising privacy demands.

End-User Industry Analysis

In 2025, the Banking, Financial Services, and Insurance sector holds 41.8% of the market share due to strict regulatory and reporting obligations. Financial institutions require highly accurate datasets to support risk assessment, fraud detection, and compliance audits. Data quality management platforms are used to reconcile transaction records and validate financial reporting.

High transaction volumes and sensitive customer information increase operational risks in this sector. Errors in data processing can result in financial penalties and reputational impact. As regulatory scrutiny intensifies, structured data governance and quality assurance remain critical priorities for financial institutions.

For instance, in October 2025, Oracle deepened ties with Informatica for cloud data quality on its infrastructure, targeting finance workloads. Features include fast cleansing for Exadata and HANA. BFSI organizations gain compliant, high-speed processing to fight fraud and meet regulations, fueling demand for industry-specific tools.

Emerging trends

A significant emerging trend in the Data Quality Management market is the automation of quality processes using artificial intelligence and machine learning. Nearly 60% of organizations now prioritize live data monitoring for applications such as fraud detection and instant decision systems, ensuring information remains accurate and reliable as it flows continuously.

Organizations are beginning to apply automated data profiling, anomaly detection and rule enforcement to address quality issues without extensive manual intervention. These capabilities are increasingly necessary as data volumes and complexity grow, helping teams detect issues in real time and maintain quality across diverse systems.
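Automated profiling and anomaly detection can be sketched in a few lines of standard-library Python. This example is an illustration only: it assumes a single numeric column, a basic z-score test with a hypothetical threshold of 2.0, and made-up sample values. Production tools generally use more robust statistics or learned models.

```python
import statistics
from typing import Optional


def profile(values: list[Optional[float]]) -> dict[str, float]:
    """Summarize a column: row count, missing values, distinct values, mean."""
    present = [v for v in values if v is not None]
    return {
        "count": len(values),
        "missing": len(values) - len(present),
        "distinct": len(set(present)),
        "mean": statistics.fmean(present) if present else float("nan"),
    }


def zscore_anomalies(values: list[Optional[float]], threshold: float = 2.0) -> list[int]:
    """Return indexes of values more than `threshold` standard deviations from the mean."""
    present = [(i, v) for i, v in enumerate(values) if v is not None]
    if len(present) < 2:
        return []
    numbers = [v for _, v in present]
    mean = statistics.fmean(numbers)
    spread = statistics.pstdev(numbers)  # population standard deviation
    if spread == 0:
        return []
    return [i for i, v in present if abs(v - mean) / spread > threshold]


# Hypothetical transaction amounts with one gap and one suspicious value.
amounts = [100.0, 102.0, None, 98.0, 101.0, 99.0, 500.0]

summary = profile(amounts)
flagged = zscore_anomalies(amounts)
print(summary)
print("Anomalous row indexes:", flagged)
```

One design caveat: with very small samples a single extreme value inflates the standard deviation, so the threshold has to be chosen with the sample size in mind.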

Another emerging trend involves integrating quality frameworks into broader data operations practices so that quality checks occur at the point of data creation and ingestion rather than only during periodic audits. This shift supports proactive quality management, reducing the cost and impact of errors downstream in analytics and reporting processes.
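Checking quality at the point of ingestion, rather than in a later audit, can be sketched as a validation gate that routes each incoming record to an accepted set or a quarantine set. The schema, the field names, and the quarantine policy below are hypothetical assumptions chosen for illustration.

```python
from datetime import date

# Hypothetical schema: fields that every incoming record must carry.
REQUIRED_FIELDS = ("customer_id", "signup_date")


def validate(record: dict[str, str]) -> list[str]:
    """Return a list of problems found in one record (empty means valid)."""
    errors = []
    for name in REQUIRED_FIELDS:
        if not record.get(name):
            errors.append(f"missing {name}")
    raw_date = record.get("signup_date")
    if raw_date:
        try:
            date.fromisoformat(raw_date)
        except ValueError:
            errors.append("signup_date is not an ISO date")
    return errors


def ingest(records: list[dict[str, str]]):
    """Split records into accepted ones and quarantined (record, errors) pairs."""
    accepted, quarantined = [], []
    for record in records:
        errors = validate(record)
        if errors:
            quarantined.append((record, errors))
        else:
            accepted.append(record)
    return accepted, quarantined


# Hypothetical incoming batch, for illustration only.
incoming = [
    {"customer_id": "C1", "signup_date": "2025-03-01"},
    {"customer_id": "", "signup_date": "2025-03-02"},
    {"customer_id": "C3", "signup_date": "03/04/2025"},
]

accepted, quarantined = ingest(incoming)
print(len(accepted), "accepted;", len(quarantined), "quarantined")
```

Quarantining bad records together with their error messages keeps them out of downstream analytics while preserving enough context to fix them at the source.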

Growth Factors

Growth in Data Quality Management is driven by the surge in data generated from IoT devices and cloud platforms. More than 70% of market expansion is linked to managing this growing volume, as traditional manual approaches struggle to maintain accuracy, speed, and consistency across complex systems.

Another major factor is the demand for trustworthy AI outcomes, with 50% of business leaders identifying reliable data as essential for strategic success. High-quality data reduces costly errors, strengthens predictive models, and enables organizations to build smarter, insight-led business strategies.

Key Market Segments

By Component

- Software

  - Data Profiling & Discovery

  - Data Cleansing & Standardization

  - Data Matching & Deduplication

  - Data Monitoring & Auditing

  - Master Data Management Support

  - Others

- Services

  - Professional Services

  - Implementation & Integration

  - Consulting & Managed Services

  - Support & Maintenance

  - Others

By Deployment Mode

- Cloud-based/SaaS

- On-premises

By Organization Size

- Large Enterprises

- Small and Medium-sized Enterprises

By Data Type

- Customer Data

- Product Data

- Financial Data

- Operational Data

- Others

By End-User Industry

- Banking, Financial Services, and Insurance

- Healthcare

- Retail & E-commerce

- IT & Telecommunications

- Manufacturing

- Others

Key Regions and Countries

North America

- US

- Canada

Europe

- Germany

- France

- The UK

- Spain

- Italy

- Russia

- Netherlands

- Rest of Europe

Asia Pacific

- China

- Japan

- South Korea

- India

- Australia

- Singapore

- Thailand

- Vietnam

- Rest of APAC

Latin America

- Brazil

- Mexico

- Rest of Latin America

Middle East & Africa

- South Africa

- Saudi Arabia

- UAE

- Rest of MEA

Drivers

Growing Need for Accurate Data

The need for accurate and reliable data continues to drive the adoption of data quality management solutions. Businesses across sectors increasingly rely on data for decision-making, planning, and operational strategies. Inaccurate or inconsistent data can lead to flawed insights, operational errors, and lost opportunities, making effective data quality practices essential for maintaining competitiveness and efficiency.

As organizations handle large volumes of information from multiple sources, ensuring data clarity and consistency becomes critical. High-quality data allows teams to perform analytics confidently, enhance customer experiences, and make strategic decisions without disruptions. This growing reliance on trustworthy information strengthens the market demand for comprehensive data quality solutions.

For instance, in February 2026, IBM's Watsonx.data intelligence advanced augmented data quality with AI-assisted rule creation and automated remediation, helping firms manage trust across data lifecycles amid rising AI demands. This addresses messy data from diverse sources, ensuring reliable inputs for operations and decisions in complex environments.

Restraint

High Implementation Costs

High implementation costs remain a key barrier for organizations considering data quality management solutions. Initial expenses for software licensing, system deployment, and integration with existing IT infrastructure can be substantial, particularly for smaller companies. Many organizations struggle to justify these investments when immediate financial returns are not clearly visible.

Additionally, ongoing maintenance and operational costs add to the financial burden. Organizations need trained personnel to manage these systems and ensure they function effectively, which further increases the total cost of ownership. These financial constraints slow down adoption despite recognition of the long-term benefits of high-quality data.

For instance, in January 2026, Oracle Database 26ai brought AI directly into the database, avoiding costly data movement and additional platforms and easing setup costs for quality management. Firms save on pipelines while keeping security tight, which lowers the barrier to getting started.

Opportunities

Adoption of AI

The integration of artificial intelligence into data quality management presents a major opportunity for organizations. AI can automate processes like data cleansing, anomaly detection, and enrichment, reducing manual work and increasing efficiency. Intelligent systems also adapt over time, improving their accuracy and reliability as they process more information.

AI-driven solutions enable real-time validation and predictive quality checks, allowing organizations to identify and resolve data issues before they impact operations. This capability helps businesses manage complex data environments effectively, supporting more confident decision-making and operational resilience.

For instance, in January 2026, Ataccama launched agentic AI in its ONE platform to automate data checks and observability, using AI to speed up quality assurance in AI pipelines. It cuts preparation time from days to hours, boosting trust for enterprise AI use.

Challenges

Ensuring Compliance and Security

Meeting regulatory requirements and maintaining data security presents a significant challenge in data quality management. Organizations must ensure that their data practices comply with evolving privacy laws while still maintaining accuracy, completeness, and accessibility.

Security concerns further complicate implementation, as companies must protect sensitive information against breaches and unauthorized access while performing quality checks. Balancing compliance, security, and data integrity requires careful planning, robust controls, and ongoing monitoring to ensure systems remain reliable and secure.

For instance, in February 2026, SAS strengthened AI governance at Innovate 2025 with synthetic data tools for regulated sectors that help validate reliability. Compliance remains difficult as firms handle privacy in data-scarce scenarios, which requires strong safeguards for real-world use.

Key Players Analysis

The Data Quality Management Market is led by established enterprise software providers with deep analytics and integration capabilities. IBM Corporation, SAP SE, and Oracle Corporation offer comprehensive data governance and master data management platforms. Their solutions are widely adopted across banking, healthcare, and retail sectors where regulatory compliance and accurate reporting are critical. Strong global service networks and cloud deployment models reinforce their leadership positions.

Specialized data quality vendors contribute strong profiling, cleansing, and enrichment tools. Informatica Inc., SAS Institute Inc., and Talend S.A. focus on automated validation, metadata management, and AI enabled anomaly detection. These providers emphasize real time data monitoring and integration with modern data lakes. Their platforms support large enterprises that require high accuracy across complex multi source environments.

Niche and emerging players strengthen competition through targeted innovation. Ataccama Corporation, Experian plc, Precisely, and Syniti deliver focused data integrity and enrichment solutions. Vendors such as Trillium Software, Data Ladder, Innovative Systems, Inc., Melissa, and DQ Global compete through flexible pricing and industry specific solutions. The competitive landscape remains moderately fragmented, with differentiation driven by automation capabilities, regulatory alignment, and integration with cloud native architectures.

Top Key Players in the Market

- IBM Corporation

- Informatica Inc.

- SAP SE

- Oracle Corporation

- SAS Institute Inc.

- Talend S.A.

- Ataccama Corporation

- Experian plc

- Precisely

- Syniti

- Trillium Software

- Data Ladder

- Innovative Systems, Inc.

- Melissa

- DQ Global

- Others

Recent Developments

- In October 2025, SAP launched enhanced AI-powered data quality features in SAP Datasphere, focusing on real-time cleansing and lineage for cloud analytics. The update targets North American enterprises, improving data trust by 30% in customer deployments and reinforcing SAP’s position in unified data management.

- In September 2025, Talend partnered with Snowflake to deliver native data quality in the Snowflake ecosystem, supporting automated pipelines for 100+ joint customers. The collaboration accelerates clean data delivery for AI, positioning Talend strongly in cloud-native quality management.

Report Scope

| Report Features | Description |
|---|---|
| Market Value (2025) | USD 2.4 Bn |
| Forecast Revenue (2035) | USD 58.1 Bn |
| CAGR (2026-2035) | 37.2% |
| Base Year for Estimation | 2025 |
| Historic Period | 2020-2024 |
| Forecast Period | 2026-2035 |
| Report Coverage | Revenue forecast, AI impact on Market trends, Share Insights, Company ranking, competitive landscape, Recent Developments, Market Dynamics and Emerging Trends |
| Segments Covered | By Component (Software, Services), By Deployment Mode (Cloud-based/SaaS, On-premises), By Organization Size (Large Enterprises, Small and Medium-sized Enterprises), By Data Type (Customer Data, Product Data, Financial Data, Operational Data, Others), By End-User Industry (Banking, Financial Services, and Insurance, Healthcare, Retail & E-commerce, IT & Telecommunications, Manufacturing, Others) |
| Regional Analysis | North America – US, Canada; Europe – Germany, France, The UK, Spain, Italy, Russia, Netherlands, Rest of Europe; Asia Pacific – China, Japan, South Korea, India, New Zealand, Singapore, Thailand, Vietnam, Rest of APAC; Latin America – Brazil, Mexico, Rest of Latin America; Middle East & Africa – South Africa, Saudi Arabia, UAE, Rest of MEA |
| Competitive Landscape | IBM Corporation, Informatica Inc., SAP SE, Oracle Corporation, SAS Institute Inc., Talend S.A., Ataccama Corporation, Experian plc, Precisely, Syniti, Trillium Software, Data Ladder, Innovative Systems, Inc., Melissa, DQ Global, Others |
| Customization Scope | Customization for segments and at region/country level will be provided. Additional customization can be done based on requirements. |
| Purchase Options | Three licenses are available: Single User License, Multi-User License (Up to 5 Users), Corporate Use License (Unlimited User and Printable PDF) |
